Discover the Key Digital Marketing Trends for 2025 and Beyond

Forecasting the Future: Key Digital Marketing Trends for 2025

What Will 2025 Bring for Digital Marketers?

The digital marketing landscape is evolving rapidly, driven by technological advancements and changing consumer expectations.

In marketing, staying ahead of the latest trends is crucial for maintaining a competitive edge.

This article provides an updated forecast of the key digital marketing trends for 2025 to help you prepare for the challenges and opportunities ahead.

Let’s dive into the most important trends shaping digital marketing in 2025:

1. AI-Driven Hyper-Personalization

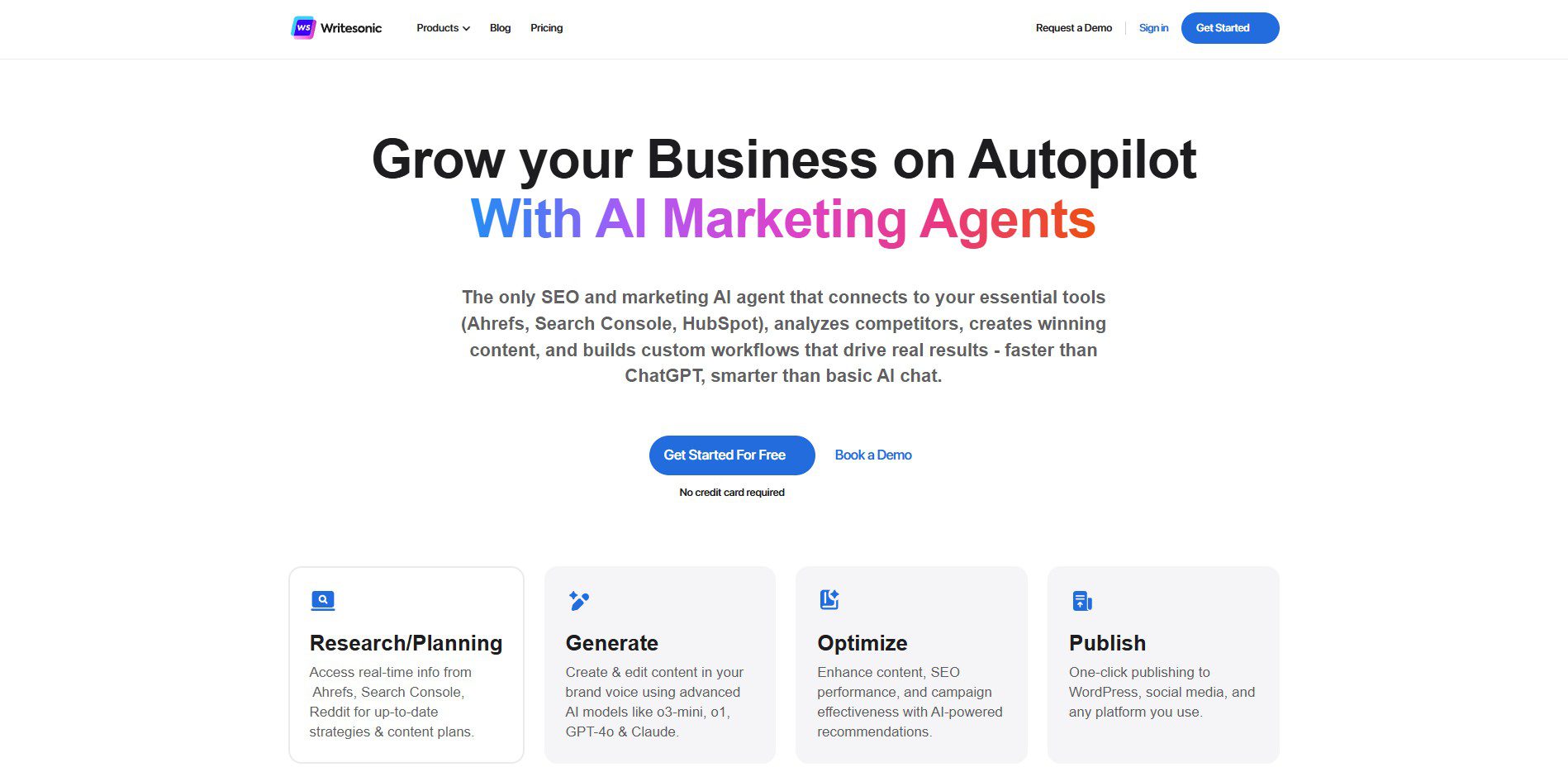

Artificial Intelligence (AI) is revolutionizing marketing by enabling more sophisticated and personalized consumer interactions.

AI algorithms analyze vast amounts of data to understand consumer behavior, preferences, and intent, allowing marketers to deliver hyper-personalized content, product recommendations, and advertising.

Expect AI to drive dynamic and real-time personalization in customer interactions, increasing engagement and conversion rates.

2. Voice and Conversational Search Optimization

With the continued rise of smart speakers and virtual assistants like Amazon Alexa, Google Assistant, and Apple Siri, optimizing for voice search is no longer optional.

Because consumers phrase voice queries in natural language, marketers must adapt their SEO strategies to target long-tail, conversational keywords and use structured data markup to help search engines understand their content and improve its visibility in search results.
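To make "structured data markup" concrete: one widely used form is schema.org vocabulary embedded in a page as JSON-LD. The sketch below is a minimal illustration, assuming you want to mark up question-and-answer content with the schema.org `FAQPage`, `Question`, and `Answer` types. The helpers `build_faq_jsonld` and `to_script_tag` are hypothetical names for this example, not part of any library, and search engines decide for themselves whether and how to use such markup.

```python
import json


def build_faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs.

    build_faq_jsonld is a hypothetical helper used only for illustration.
    """
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def to_script_tag(data):
    """Wrap the JSON-LD in the script element pages use to embed it (hypothetical helper)."""
    body = json.dumps(data, indent=2)
    # Escape "</" so answer text cannot close the script element early;
    # "\/" is a valid JSON escape and still decodes to "/".
    body = body.replace("</", "<\\/")
    return '<script type="application/ld+json">\n' + body + "\n</script>"


if __name__ == "__main__":
    faq = build_faq_jsonld([
        (
            "How can businesses prepare for voice search optimization?",
            "Use conversational keywords, optimize for featured snippets, "
            "and structure content around the questions people naturally ask.",
        ),
    ])
    print(to_script_tag(faq))
```

The printed script element would be placed in the page's HTML alongside the visible question-and-answer text it describes; the markup only labels content that is already on the page.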

3. The Continued Dominance of Video Content

Short-form video formats such as TikTok, YouTube Shorts, and Instagram Reels are reshaping how brands communicate with audiences.

Marketers must invest in engaging, high-quality video content that captures attention quickly.

Additionally, AI video-generation tools are becoming more capable, making it easier to produce video at scale while maintaining quality.

4. The Rise of Interactive Content and Gamification

Adoption of interactive content, including quizzes, polls, surveys, and AR/VR experiences, will continue to grow.

Brands will leverage gamification strategies to boost engagement and retention. Immersive experiences, such as virtual try-ons in e-commerce, will become mainstream, enhancing the customer journey and increasing conversions.

5. The Growth of Social Commerce

Social media platforms are rapidly evolving into powerful e-commerce channels.

Features like in-app checkout, live shopping, and AI-powered shopping assistants are streamlining the customer journey, enabling seamless transactions directly from social media feeds.

Brands will integrate social commerce more deeply into their digital marketing strategies.

6. Privacy-First Marketing and First-Party Data Strategies

With stricter data privacy regulations (such as GDPR and CCPA) and the decline of third-party cookies, marketers are shifting toward first-party data collection.

Businesses will prioritize transparency, consent-driven data collection, and secure data management to build consumer trust, while still using AI-driven insights to keep their marketing effective.

7. The Evolution of Influencer Marketing: Micro & Nano-Influencers

Consumers seek authentic, relatable content, making micro- and nano-influencers (those with smaller but highly engaged followings) more valuable for brand collaborations.

These influencers often achieve higher engagement rates and foster a more community-driven relationship with their audiences than traditional celebrity endorsements do.

8. Customer Experience (CX) Optimization with AI

Customer experience is becoming a competitive differentiator. AI-powered chatbots, predictive analytics, and real-time customer support will be widely adopted to enhance user interactions.

Businesses will prioritize seamless omnichannel experiences, ensuring consistency across websites, social media, email, and mobile apps.

9. Augmented Reality (AR) and Virtual Reality (VR) Marketing

AR and VR technologies are transforming digital marketing by offering immersive brand experiences.

From virtual store walkthroughs to interactive product demonstrations, these technologies will bridge the gap between online and offline shopping experiences, boosting consumer engagement and purchase intent.

10. Sustainability and Ethical Branding

Consumers increasingly favor brands demonstrating corporate social responsibility (CSR) and sustainable practices.

Ethical branding, sustainability initiatives, and purpose-driven marketing will be crucial in shaping brand perception.

Companies that authentically align with social and environmental causes will build stronger customer loyalty and trust.

❓ FAQs: Key Digital Marketing Trends for 2025

What are the top digital marketing trends for 2025?

The key trends include AI-driven personalization, social commerce growth, voice search optimization, short-form video dominance, and AR/VR marketing.

How will AI impact digital marketing in 2025?

AI will enhance hyper-personalization, automate content creation, improve customer segmentation, and provide predictive analytics for better marketing strategies.

Why is social commerce important for marketers?

Social media platforms are integrating e-commerce features, allowing brands to sell directly through apps, streamline shopping experiences, and increase conversions.

How can businesses prepare for voice search optimization?

They should use conversational keywords, optimize for featured snippets, and structure content to align with how people naturally ask questions via voice assistants.

What role does video content play in 2025 marketing?

Short-form videos on TikTok, YouTube Shorts, and Instagram Reels will dominate, requiring brands to create engaging, bite-sized content for higher engagement.

How are data privacy regulations affecting digital marketing?

With stricter GDPR and CCPA rules, brands must focus on first-party data collection, transparent consent management, and privacy-first marketing strategies.

What is the significance of micro- and nano-influencers?

Smaller influencers with engaged audiences provide authentic brand promotion, higher trust levels, and better niche targeting compared to celebrity endorsements.

How will AR and VR shape marketing strategies?

Brands will use AR/VR for immersive shopping experiences, virtual try-ons, interactive storytelling, and gamified brand engagement to boost conversions.

What is sustainability’s role in digital marketing?

Consumers increasingly prefer eco-conscious brands. Companies that embrace sustainability and social responsibility will gain customer trust and long-term brand loyalty.

What should businesses do to stay ahead in digital marketing?

Stay updated on trends, leverage AI, create engaging content, prioritize data privacy, optimize customer experiences, and embrace emerging technologies.

Conclusion – Key Digital Marketing Trends for 2025

Digital marketing in 2025 will be defined by AI-driven personalization, emerging technologies, and a stronger focus on customer experience and ethical considerations.

Staying ahead of these trends will enable businesses to create meaningful connections with their audiences while driving growth and innovation.

By embracing these changes, marketers can keep their strategies relevant and effective in an ever-changing digital environment.


This article is also part of the Definitive Guide to Brilliant Emerging Technologies in the 21st Century.

Thanks for reading.


ℹ️ Note: Due to the ongoing development of applications and websites, the actual appearance of the websites shown may differ from the images displayed here.

The cover image was created using Leonardo AI ⬈.